Welcome to Tech Updates: #9.

This newsletter is a deep dive into the tools and AI workflows shaping the digital frontier. I’m sharing my process of building and exploring in the open so we can navigate the future of tech together. You can read the updates below, or watch the video version on YouTube, embedded here for your enjoyment!

Research and Learning:

Learning Minimax

Reading about the Google Chrome browser MCP.

Reading about database MCP toolbox.

Learning Picoclaw

Future Research to do:

Commands to remember:

Nothing obvious this week.

Improvements:

Fixed Cloudflare routing by setting up 2 tunnels vs 1.

Got the art skills in PAI up and running with the APIs. Pretty cool.

Consolidated 2 home lab pages in Notion to 1.

Created homelab diagrams with PAI art skill.

Created a video on automating DaVinci Resolve updates on Arch Linux.

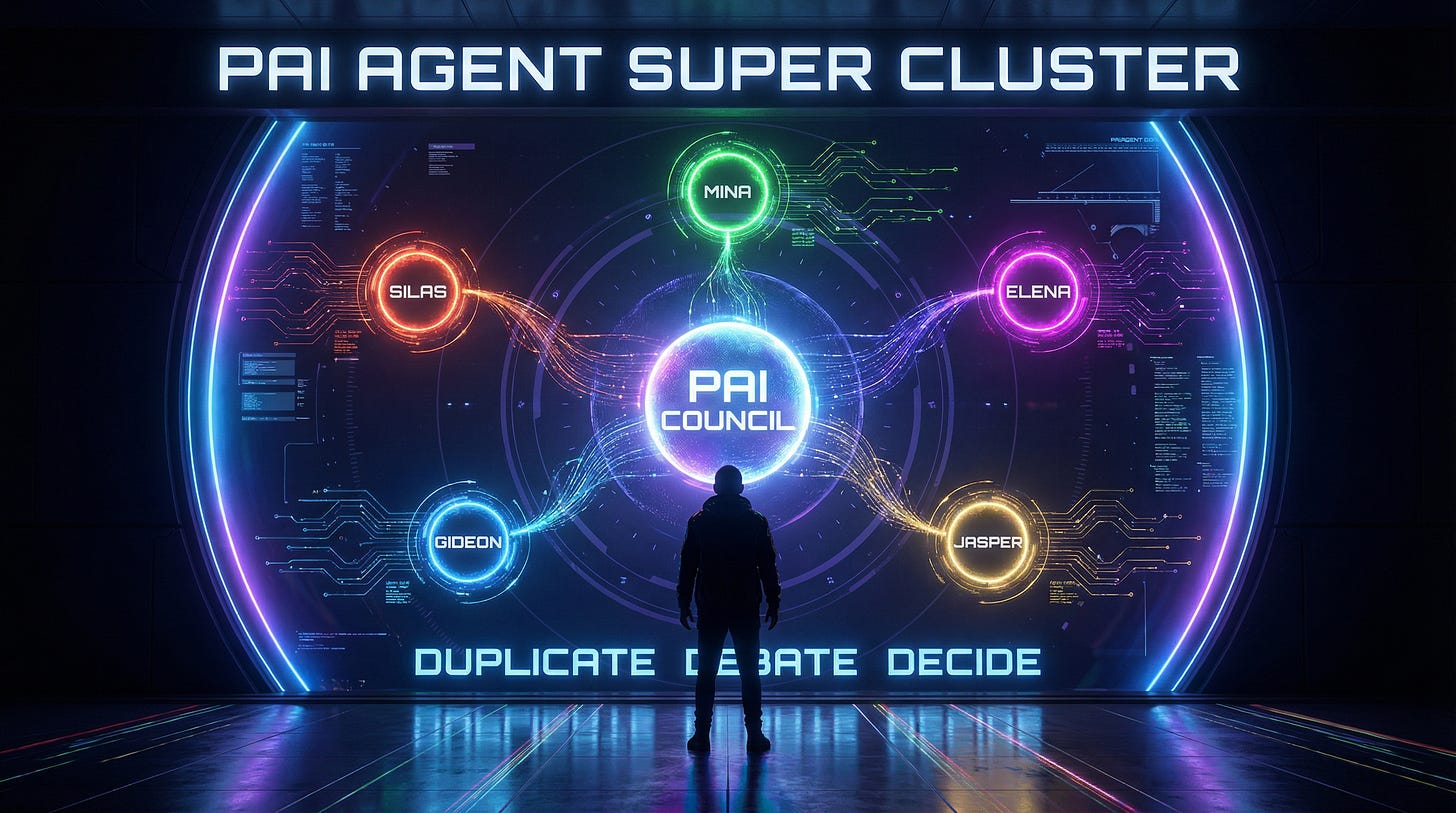

PAI upgraded to 2.5

Summary of Today’s Linux Fixes (PAI 2.5 Migration):

Voice Server: Migrated from ElevenLabs → Qwen3-TTS (local AI, no API costs)

Updated audio_player.py: afplay → mpv --no-video

Statusline Restored (3 fixes from PAI 2.5 regressions):

Location: IP geolocation → Hardcoded

Temperature: Celsius → Fahrenheit

MCP Count: Missing → Added with 10min cache

Git Repos Migrated:

PAI/USER: 44 modified files (templates → my customizations)

MEMORY: Session learning files from today

CustomAgents: Restored with clean working tree

AI Steering Rule Added: Web server 0.0.0.0 binding + IP display

Directory Structure: CORE → PAI paths updated throughout
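To make the audio_player.py change from the summary above concrete, here is a minimal sketch of picking the player per platform. It is not the actual PAI code: the function names `player_command` and `play` are hypothetical, and it assumes `afplay` is only wanted on macOS while everything else uses `mpv --no-video`.

```python
import shutil
import subprocess
import sys


def player_command(audio_path, platform=sys.platform):
    """Return the command line used to play an audio file.

    Hypothetical helper: macOS keeps afplay, anything else uses mpv.
    """
    if platform == "darwin":
        return ["afplay", audio_path]
    # --no-video stops mpv from opening a window for cover art.
    return ["mpv", "--no-video", audio_path]


def play(audio_path):
    """Play the file, failing early if the player is not installed."""
    cmd = player_command(audio_path)
    if shutil.which(cmd[0]) is None:
        raise FileNotFoundError(f"{cmd[0]} is not installed or not on PATH")
    subprocess.run(cmd, check=True)
```

Keeping the command builder separate from the function that runs it makes the platform switch easy to check without playing any sound.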

Wrote a skill for PAI for grabbing local files easily when I am remote over SSH by sending them to Nextcloud.

Published it to GitHub after requests for it in forums.
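For anyone curious how a file can get from a remote box into Nextcloud, here is a rough sketch using Nextcloud's WebDAV endpoint. This is not the code from the published skill, which may work differently; the host, user name, and the helper names `webdav_url` and `build_upload_request` are placeholders I made up for illustration.

```python
import base64
import urllib.parse
import urllib.request
from pathlib import Path


def webdav_url(base_url, user, remote_path):
    """Build the WebDAV URL for a file in a Nextcloud user's storage."""
    quoted_user = urllib.parse.quote(user, safe="")
    quoted_path = urllib.parse.quote(remote_path.strip("/"))
    return f"{base_url.rstrip('/')}/remote.php/dav/files/{quoted_user}/{quoted_path}"


def build_upload_request(base_url, user, app_password, local_file, remote_path):
    """Prepare (but do not send) an authenticated PUT request for the file."""
    data = Path(local_file).read_bytes()
    token = base64.b64encode(f"{user}:{app_password}".encode()).decode()
    return urllib.request.Request(
        webdav_url(base_url, user, remote_path),
        data=data,
        method="PUT",
        headers={"Authorization": f"Basic {token}"},
    )


# Sending it would look like this (assumes a reachable server and an app password):
# with urllib.request.urlopen(build_upload_request(...)) as resp:
#     print(resp.status)
```

Using an app password rather than the main account password keeps the credential easy to revoke if the remote machine is ever compromised.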

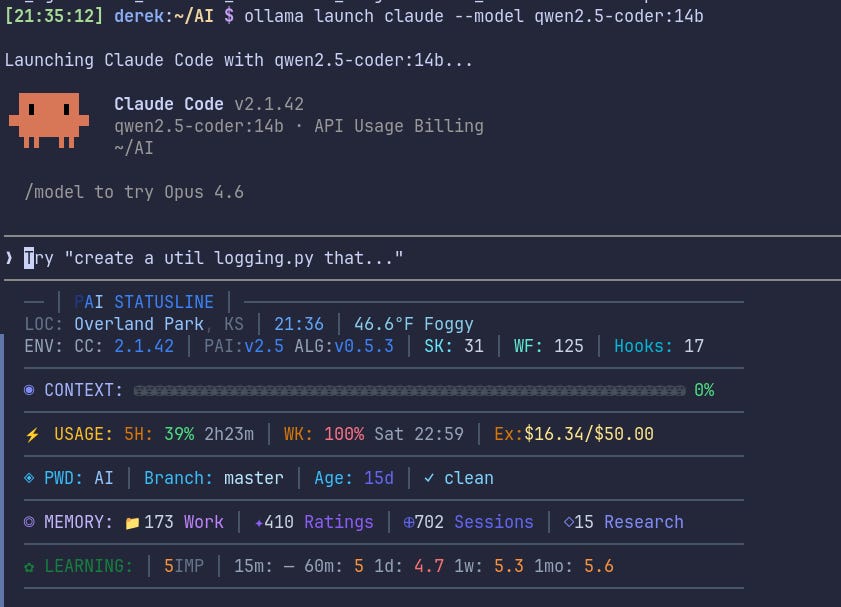

https://github.com/drake495/PAI-FileShare-skill

Upgraded the algorithm in PAI to v0.5.3.

Created a video for installing PAI 2.5 on Arch Linux.

Updated the local AI models for Ollama.

| Model | Best for | Size | Notes |
| --- | --- | --- | --- |
| qwen2.5-coder:14b | Coding & technical | 9.0 GB | Best-in-class code at this tier |
| phi4:14b | Logic & math | 9.1 GB | Structured reasoning, underrated |
| gemma3:12b | General daily driver | 8.1 GB | Well-rounded, great at creative + conversation |
| llama3.1:8b | Fast fallback | 4.9 GB | Quick replies, leaves VRAM headroom |

Each one fits cleanly in 12 GB of VRAM.
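A quick bit of arithmetic on the sizes listed above, as a sketch. It assumes the models are loaded one at a time and treats the listed size as a rough stand-in for VRAM use; real usage runs higher once context is loaded, so the headroom numbers are optimistic.

```python
VRAM_GB = 12.0

# Sizes as listed above, in GB.
MODELS = {
    "qwen2.5-coder:14b": 9.0,
    "phi4:14b": 9.1,
    "gemma3:12b": 8.1,
    "llama3.1:8b": 4.9,
}


def headroom(models, vram_gb):
    """Return the GB left over when each model is loaded on its own."""
    return {name: round(vram_gb - size, 1) for name, size in models.items()}


def total_size(models):
    """Return the combined size of all models in GB."""
    return round(sum(models.values()), 1)


for name, free in headroom(MODELS, VRAM_GB).items():
    print(f"{name}: {free} GB free")
print(f"All four together: {total_size(MODELS)} GB")
```

The combined total is well over 12 GB, so the four models fit individually, not side by side.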

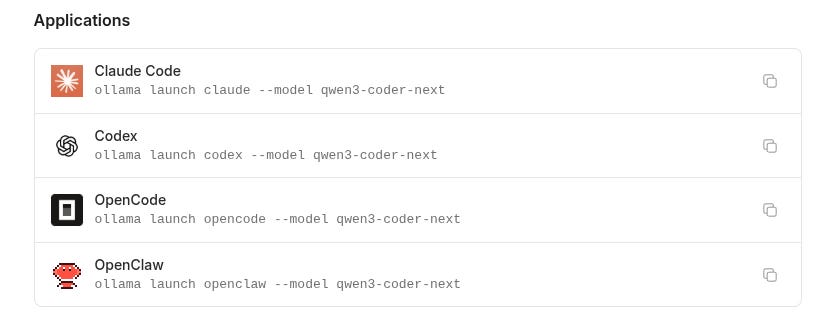

Got Picoclaw installed in a VM and pointed it at Ollama, so a local LLM acts as the brain and saves money.
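I won't guess at Picoclaw's own config format here, but any tool pointed at Ollama ends up talking to its HTTP API. Below is a minimal sketch of that call, assuming Ollama is on its default port 11434 and the model is already pulled. The helper names are made up, and from inside a VM the host would need to be the Ollama machine's address instead of localhost.

```python
import json
import urllib.request

# Ollama's default endpoint; swap localhost for the host's IP when calling from a VM.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model, prompt, url=OLLAMA_URL):
    """Prepare a non-streaming generate request for Ollama."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, url=OLLAMA_URL, timeout=120):
    """Send the prompt and return the model's text reply."""
    request = build_generate_request(model, prompt, url)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example call (needs a running Ollama server):
# print(generate("llama3.1:8b", "Say hello in five words."))
```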

Upgraded PAI to 3.0 and the Algorithm to 1.5. https://github.com/danielmiessler/Personal_AI_Infrastructure

Got Claude Code + PAI running with a local model as the brain instead of Opus.

Mistakes:

Mostly grammar and typos when publishing content. lol.

That’s it for this week’s Tech Update. -Derek

Check out my Social Media Links, my Book, my Podcast, and my Substack below: